A SINGLE Graphics Card = 1 teraFLOPS


| Author | Message |

|---|---|

|

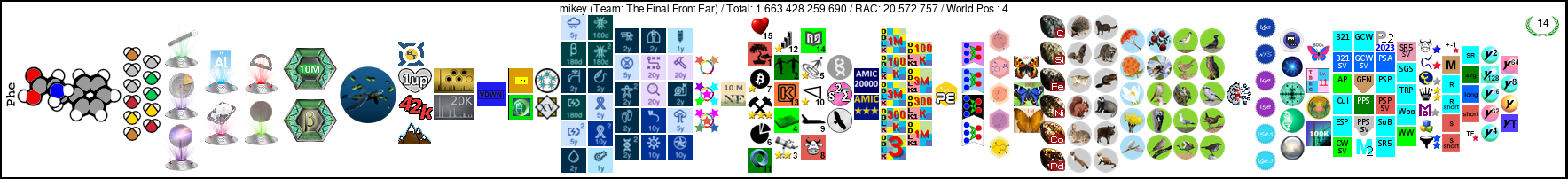

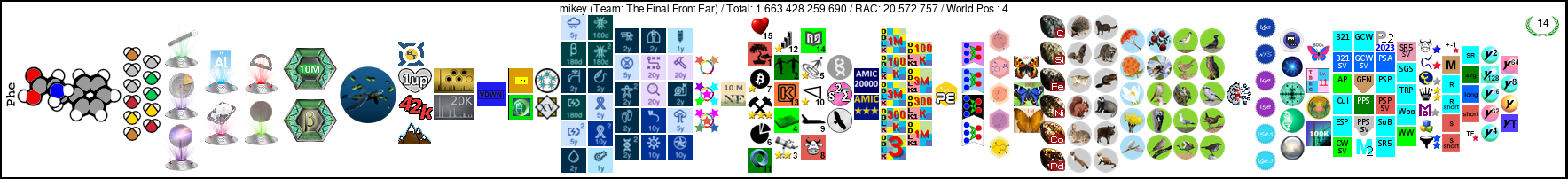

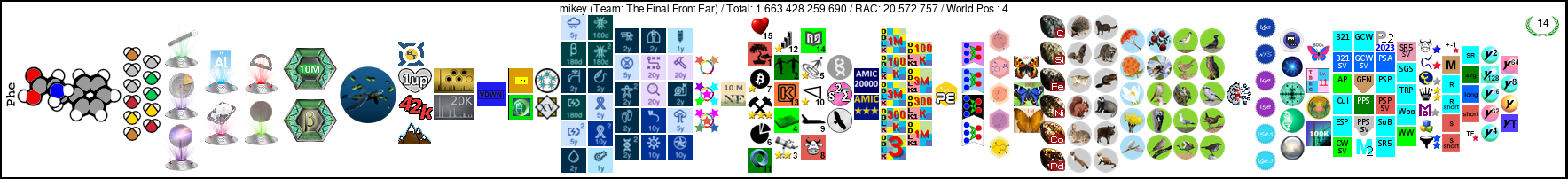

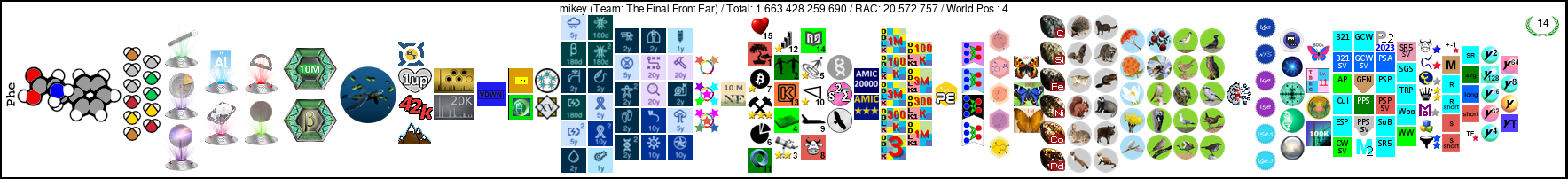

mikey Joined: 5 Jan 06 Posts: 1899 Credit: 12,884,078 RAC: 1,567 |

I tried doing GPU Grid and it stopped my BOINC crunching on one of the CPUs. I am using version 6.4.5 and I know there are newer versions that address some of these issues, but I really want to crunch for Rosetta with my GPU. Einstein would be okay too, but currently neither one supports video card crunching. I am back to what works for me, at least for now. I guess I am in for another test day! Thanks

|

Paul D. Buck Joined: 17 Sep 05 Posts: 815 Credit: 1,812,737 RAC: 0 |

> I tried doing GPU Grid and it stopped my BOINC crunching on one of the CPUs. I am using version 6.4.5 and I know there are newer versions that address some of these issues, but I really want to crunch for Rosetta with my GPU. Einstein would be okay too, but currently neither one supports video card crunching. I am back to what works for me, at least for now.

Me too ... I ran out of disk space and the drive started to develop directory errors, which the tools won't fix because there is not enough space ... so I bought a set of six 1.5 TB drives ... two of them are failing SMART ... and with only 4 I cannot rebuild my RAID 5 array as I used one for the OS ... so, do I re-install the OS for the 5th time (I installed it on one of the failing drives first, sigh) and go through all the work and time to recover all my settings (again) so I can use the 4 drives that seem to work? Sigh ...

Anyway, BOINC Manager 6.5.0 is not perfect, but it is as close as these more modern versions get. The next best version is 6.2.19, then 6.4.5 ... none of the 6.6.x versions will work long term. They each have issues that, if they don't show up right away, will soon bite you ... You can look at the long list of threads over at GPU Grid for more.

I am using 6.5.0 on all my GPU-powered systems and have no specific complaints, though at times it looks like the work fetch is not keeping the queue properly filled. However, in my case I am making a concentrated effort on several projects in succession, so that is less of an issue as I slowly run down my list of projects to "force up" my totals ... and as I get them to target levels, fall back to support levels of Resource Share ...

Anyway ... good luck with the tests ... |

|

mikey Joined: 5 Jan 06 Posts: 1899 Credit: 12,884,078 RAC: 1,567 |

> I tried doing GPU Grid and it stopped my BOINC crunching on one of the CPUs. I am using version 6.4.5 and I know there are newer versions that address some of these issues, but I really want to crunch for Rosetta with my GPU. Einstein would be okay too, but currently neither one supports video card crunching. I am back to what works for me, at least for now.

Choices, choices, so many for you to choose from. I am assuming you have a disk image of your settings for your backup? Personally I would not install anything I use regularly on a known bad drive. I do install Windows and BOINC on bad drives BUT ONLY on BOINC-only machines. That way if they crash, again, I really don't care and have only lost that one machine and the units on it. I put a mark on the drive when it crashes; after 2 marks the drive is wiped and put in a pile to have a hole drilled in it.

I went to download BOINC 6.5.0 and it is not on the list of available downloads. I looked at several BOINC projects and just don't see it. I click on all versions and it goes from 6.4.5 to 6.6.4. I have tried 6.4.5 and did not care for it, as discussed earlier. Is 6.6.4 a viable option?

|

Paul D. Buck Joined: 17 Sep 05 Posts: 815 Credit: 1,812,737 RAC: 0 |

> Choices, choices, so many for you to choose from. I am assuming you have a disk image of your settings for your backup? Personally I would not install anything I use regularly on a known bad drive. I do install Windows and BOINC on bad drives BUT ONLY on BOINC-only machines. That way if they crash, again, I really don't care and have only lost that one machine and the units on it. I put a mark on the drive when it crashes; after 2 marks the drive is wiped and put in a pile to have a hole drilled in it.

I do have a backup ... but am still setting things up. I bought 6 new drives, only to have 2 of them fail SMART tests right away. So, they will be going back tomorrow. So, I am cleaning off one disk to make it the startup disk, and that will let me take the one 1.5 TB drive that I put the OS on and put it into the RAID array. I did build an array with the other three 1.5 TB disks, so they should be in good shape.

Another 4-6 hours moving files off the 500 GB drive will clear it, and I can use a clone copy to move the files off the current startup disk to the old data disk ... then boot off the new startup disk and make sure that works ... then take the drive out, put it into the right slot, whack the RAID array and build it anew ... THEN I can start to restore the data off the backup drives to the RAID array ...

When all that is done and I am confident that the data set is good, I can take the four old 1 TB disks of the old RAID array and use them as disks elsewhere ... like to make a larger clone of the startup disk and to have an "overflow drive" for stuff that is not that important to have on the RAID array ... And when summer is here and I may build another system, I will have a spare drive.

> I went to download BOINC 6.5.0 and it is not on the list of available downloads. I looked at several BOINC projects and just don't see it. I click on all versions and it goes from 6.4.5 to 6.6.4. I have tried 6.4.5 and did not care for it, as discussed earlier. Is 6.6.4 a viable option?

6.6.4 is a bad choice. 6.5.0 is the best alternative I have found. I have been using it for a month or so now and have no complaints, though I think the work fetch is still unbalanced and it does not really properly handle CUDA vs CPU yet ... but with futzed Resource Shares I keep CUDA work on hand and that is good enough for now ...

There is a hidden page where you can get older versions ... I always have to hunt around for it ... AH, try this |

|

mikey Joined: 5 Jan 06 Posts: 1899 Credit: 12,884,078 RAC: 1,567 |

> Choices, choices, so many for you to choose from. I am assuming you have a disk image of your settings for your backup? Personally I would not install anything I use regularly on a known bad drive. I do install Windows and BOINC on bad drives BUT ONLY on BOINC-only machines. That way if they crash, again, I really don't care and have only lost that one machine and the units on it. I put a mark on the drive when it crashes; after 2 marks the drive is wiped and put in a pile to have a hole drilled in it.

Thank you for the link, I have downloaded it and I will try it. Also good luck on the RAID array. I tried doing a RAID one time but it never worked as it should have.

|

Paul D. Buck Joined: 17 Sep 05 Posts: 815 Credit: 1,812,737 RAC: 0 |

> Thank you for the link, I have downloaded it and I will try it.

Well, cleared a 500 GB drive and am now cloning the current boot drive to the cleared drive ...

The RAID array has been reasonable; expensive and difficult to work with at times, but it pretty much works if you have the stars and moon aligned. One thing that got in the way was that the battery was being 'conditioned', and with installs I was rebooting so much that this process would have to start over ... finally I was in a state where it could finish ... and with two new drives failing SMART ... well, that did not help matters. I did get 3 drives to make a RAID 5 array, which I will now have to whack to add the additional drive.

At the moment I use the Apple RAID card for a RAID 5 array and SoftRAID for several stripe or mirror arrays ... I use a stripe array for my backup ... which is one place where I wish that SoftRAID would get their finger out and add RAID 5 to their product ... at the moment all you can do for safety is a mirror with their software. Sigh ...

And good luck to you with 6.5.0 ... you can find the link any time you need it ... just use the download page, copy the link of any of the downloads, and strip off the file name and there you are ... or you could copy the 6.6.4 link and change the numbers to the version you want to capture ...

Cheers ... |
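The two link tricks described in this post (strip the file name to reach the directory listing, or swap the version number in a known link) can be sketched in Python. This is only an illustration: the URL and file name below are hypothetical placeholders, not the real BOINC download location, and the helper names are made up for this example.

```python
from urllib.parse import urlsplit, urlunsplit

def directory_listing_url(download_url):
    """Drop the file name from a download link, leaving the directory URL."""
    parts = urlsplit(download_url)
    # Keep everything up to the last "/" in the path.
    directory = parts.path.rpartition("/")[0] + "/"
    return urlunsplit((parts.scheme, parts.netloc, directory, "", ""))

def swap_version(download_url, old_version, new_version):
    """Replace one version string with another inside a download link."""
    return download_url.replace(old_version, new_version)

# Hypothetical link, for illustration only.
example = "https://downloads.example.org/dl/client_6.6.4_windows.exe"

listing = directory_listing_url(example)
older = swap_version(example, "6.6.4", "6.5.0")
print(listing)
print(older)
```

Whether the swapped link actually resolves depends on the server still hosting that file under the same naming scheme, which is an assumption here.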

|

Resnick_MEDIC_Lab Joined: 5 Jun 06 Posts: 2751 Credit: 4,276,053 RAC: 0 |

G-d bless some of those "crazy" people over at Folding@Home (and the obligatory: "yes", it CAN play Crysis!) Atlas Folding Blog - Fighting Huntington's Disease with Folding@home

|

Greg_BE Joined: 30 May 06 Posts: 5770 Credit: 6,139,760 RAC: 0 |

What's the cost for the hardware and what is the power consumption for that beast? |

|

Resnick_MEDIC_Lab Joined: 5 Jun 06 Posts: 2751 Credit: 4,276,053 RAC: 0 |

And apparently he's paying Canadian electric rates:

Further noting:

|

Greg_BE Joined: 30 May 06 Posts: 5770 Credit: 6,139,760 RAC: 0 |

Mainframe in a box at home ... too much up-front cost for my lowly budget. Very nice to look at though.

|

|

Pharrg Joined: 10 Jul 06 Posts: 10 Credit: 6,478 RAC: 0 |

Wow! Must be nice. Of course, I don't have a $15,000 budget. However, I currently have a pair of GTX 260s, and the speed gain I've seen on some CUDA-enabled projects has been so impressive that I'm thinking of replacing them with 3 GTX 295s. My motherboard will support true 3-way SLI with all 3 slots at full x16. Of course, when running CUDA, you have to disable SLI or only 1 card gets used, but that's a simple mouse click to do.

As for speeds ... 3 nVidia GTX 295s ... Each board has 480 cores in parallel and almost 2 GB of dedicated GDDR3 RAM. Each is capable of 1.788 teraflops of processing power. Altogether, by running 3, this will give my machine 1440 cores and a whopping 5.364 teraflops of power! That's faster than many early Cray supercomputers, some of them still in operation, ever were! And it will leave your CPU nearly idle for doing other tasks!

Do the math to see what his machine is going to be capable of ... now that's extreme! Lots of great science can be done with these leaps of processing power. If AMD doesn't go bankrupt soon, perhaps the ATI FireStream technology will take off too, or perhaps OpenCL. |
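The totals in this post are simple multiplication, and a short Python sketch makes the check explicit. The per-card figures are the ones quoted in the post (peak numbers, not measured throughput), and the sketch assumes perfect linear scaling across cards, which real workloads rarely achieve.

```python
# Per-card figures as quoted in the post for one GTX 295 (peak, not measured).
CORES_PER_CARD = 480
TFLOPS_PER_CARD = 1.788

def aggregate(num_cards, cores_per_card=CORES_PER_CARD,
              tflops_per_card=TFLOPS_PER_CARD):
    """Return (total cores, total peak TFLOPS), assuming linear scaling."""
    total_cores = num_cards * cores_per_card
    total_tflops = round(num_cards * tflops_per_card, 3)
    return total_cores, total_tflops

total_cores, total_tflops = aggregate(3)
print(total_cores, total_tflops)
```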

|

Pharrg Joined: 10 Jul 06 Posts: 10 Credit: 6,478 RAC: 0 |

Oh, I forgot: in my limited testing with CUDA processing, I've traced pretty much all the errors I've seen when trying to run CUDA, including 'nvlddmkm' driver errors, driver recovery errors, BSODs, and other crashes, to temperatures. This is especially a problem on the high-end GTX 200 series of cards. Even with the cards being two slots thick, they just can't squeeze a big enough heatsink and fan into them. Just look at how large CPU coolers have gotten in comparison, for much less computing power. I installed a Cooler Master V8 cooler on my Core i7 and that thing is a monster. But, I must admit, it works extremely well. In fact, it keeps my CPU cooler under load than the stock cooler kept it at idle.

I found that once I maxed out my fan speeds and cooling on the video cards, the errors stopped. Many extreme gamers have run into the same issues on these boards as well. Lots of people try to blame the software or the GPUs themselves, when really it's a simple case of overheating. Even games like Crysis with all settings maxed are nothing compared to what CUDA is capable of doing to a GPU when efficient code is used. The type of algorithms and processes will make a difference too, so you'll see different results from different projects and apps. It will drive your card hard. Like I said, once I figured this out and worked to keep my cards cool, I haven't had a crash since. |

|

Resnick_MEDIC_Lab Joined: 5 Jun 06 Posts: 2751 Credit: 4,276,053 RAC: 0 |

From our friends at Folding@Home: Folding@home passes the 5 petaflop mark

and New paper #63: Accelerating Molecular Dynamic Simulation on Graphics Processing Units

Here are some "fair use" tid-bits:

|

Paul D. Buck Joined: 17 Sep 05 Posts: 815 Credit: 1,812,737 RAC: 0 |

Just as interesting to me is the offer, when I installed the drivers for my brand new ATI card, to install Folding@Home ... now why did the BOINC guys not get us on that list?

I did not install FaH, as I was solely interested in getting Milkyway to run a shade faster ... sadly, and most disappointing, it takes up to 13-14 seconds to run a task ... almost not worth getting an ATI GPU ...

On the other hand, aside from the Linux system which I should be turning off, all my systems now have a GPU running work for me ...

Still need more projects though ... GPU Grid won't let you stock up more than 4 tasks and my one system will run through those in 6 hours, so even a half-day outage means I run dry ... sigh ... |
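The "run dry" complaint is a small queueing calculation, sketched below in Python. The inputs are the figures from the post (a 4-task limit drained in about 6 hours); treating "half a day" as 12 hours and assuming the cache is full when the outage starts are assumptions made for this example.

```python
def cache_coverage(cached_tasks, hours_to_drain, outage_hours):
    """Return (average hours per cached task, idle GPU hours during an outage)."""
    hours_per_task = hours_to_drain / cached_tasks
    # The GPU idles for whatever part of the outage the cache cannot cover.
    idle_hours = max(0.0, outage_hours - hours_to_drain)
    return hours_per_task, idle_hours

# 4 cached tasks, drained in 6 hours, during an assumed 12-hour outage.
hours_per_task, idle_hours = cache_coverage(4, 6.0, 12.0)
print(hours_per_task, idle_hours)
```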

|

Resnick_MEDIC_Lab Joined: 5 Jun 06 Posts: 2751 Credit: 4,276,053 RAC: 0 |

milkyway, isn't that rpi's project? my s/o is an alum from there. what's the deal with gpugrid? tried it with a 9600 gso on an older amd single-core, and it tied up boinc ... when gpugrid was running on the gpu, it was also the only wu running on boinc, displacing rosie. thought i could have gpugrid on the gpu and rosie on the cpu, both at the same time ... just out of curiosity, since i've never actually finished a gpugrid wu, how are they on points? |

|

mikey Joined: 5 Jan 06 Posts: 1899 Credit: 12,884,078 RAC: 1,567 |

> Just as interesting to me is the offer when I installed the drivers for my brand new ATI card to install Folding@Home ... now why did not the BOINC guys get us on that list?

BUT at least one project is trying to get more people. It takes someone to be the first; hopefully this is but the first ripple in the tidal wave to come!

|

Paul D. Buck Joined: 17 Sep 05 Posts: 815 Credit: 1,812,737 RAC: 0 |

> milkyway, isn't that rpi's project? my s/o is an alum from there.

OK, if you run 6.4.5 or earlier you have to use a cc_config file to make things work as they should. You have to run at least 6.2.19 (I think). If you listen to Paul (few do), you will run 6.5.0 on Windows, which does not require any special configuration files. Video drivers, win32, should be 181.22 ... Then you should be good to go ...

On my i7 I usually have 14 tasks in flight ... 8 CPU tasks, 4 GPU tasks (2 on each of the GTX 295 cards), QCN and FreeHAL, all at the same time ...

Each GPU Grid task takes between 4 and 30 hours depending on the card and the task, and pays 2K and change to 3K and change ...

With my i7, Q9300, 2 dual-CPU (HT-capable) Dells, and my Mac Pro I was doing about 12-20K per day (24 cores total) ... I added a total of 6 GPU cores (9800 GT, GTX 280, 2 ea. GTX 295 (2 cores per card)) and my total went up to 60-80 some K per day (see Willy's Stats for Paul D. Buck; the earliest stats show some GPUs running already).

With the ATI card I am jumping up again. As it has run for less than 24 hours and I was still doing work on other computers, I am not sure what the ATI card is going to do per day yet ...

So, by populating all the PCI-e slots I have, I have increased production (as measured by CS) by a factor of 4 or more ...

Your 9600 card will likely take longer than my 9800 GT, and I would expect that it would take about 20-24 hours per task or maybe a little more. Still, it is "free" in the sense that you can add production just by attaching to one more project.

So: 1) make sure you are running the right drivers, 2) install the 6.5.0 BOINC Manager, 3) attach to GPU Grid, 4) rake in the work ... :) |
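The task count and the "factor of 4 or more" claim in this post can be checked with a few lines of Python. All inputs are the round figures quoted in the post; comparing the low end with the low end and the high end with the high end is an assumption about how the ranges line up.

```python
# Task mix quoted in the post for the i7 system.
cpu_tasks = 8
gpu_tasks = 2 * 2      # two GTX 295 cards, two GPU cores each
other_tasks = 2        # QCN and FreeHAL
tasks_in_flight = cpu_tasks + gpu_tasks + other_tasks

# Daily credit ranges quoted in the post, before and after adding GPUs.
credit_before = (12_000, 20_000)
credit_after = (60_000, 80_000)

def like_for_like_gain(before, after):
    """Compare low end with low end and high end with high end."""
    return after[0] / before[0], after[1] / before[1]

gain_low, gain_high = like_for_like_gain(credit_before, credit_after)
print(tasks_in_flight, gain_low, gain_high)
```

Under that pairing the gain lands between 4x and 5x, which is in line with the estimate in the post; pairing the lowest "after" figure with the highest "before" figure would give a more pessimistic 3x.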

|

Resnick_MEDIC_Lab Joined: 5 Jun 06 Posts: 2751 Credit: 4,276,053 RAC: 0 |

trouble finding link to Boinc Manager 6.5.0. currently have 6.4.5 = no go with cpu + gpu. |

Paul D. Buck Joined: 17 Sep 05 Posts: 815 Credit: 1,812,737 RAC: 0 |

> trouble finding link to Boinc Manager 6.5.0.

You have to use the generic download list to find the version you need. I always go to the download page, copy the download link they present, paste it, and cut off the file name to get the generic list.

{edit} Don't forget to remove that config file when using 6.5.0, as it is not needed. BTW, the MW app is so alpha that it is not affected by the manager version ... |

|

Resnick_MEDIC_Lab Joined: 5 Jun 06 Posts: 2751 Credit: 4,276,053 RAC: 0 |

ok, will play with tomorrow. want to leave my three ps3's on f@h, and try my two gpu's on boinc gpugrid. have seven more gpu's (all 9600 series, got 'em cheap) eventually to come online. |


©2026 University of Washington

https://www.bakerlab.org